UP LAW SENIOR LECTURER SPEAKS IN PANEL REGARDING DE-PLATFORMING EXTREMIST CONTENT BY SOCIAL MEDIA PLATFORMS

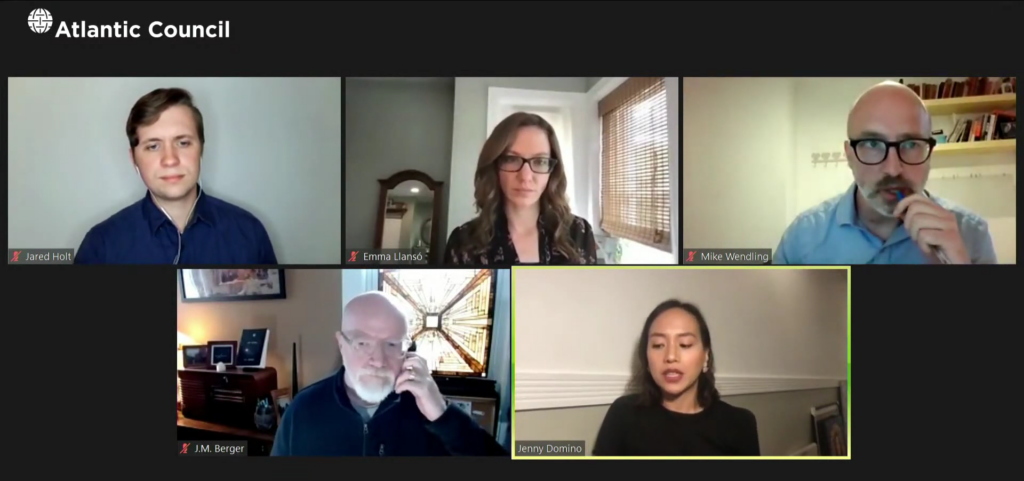

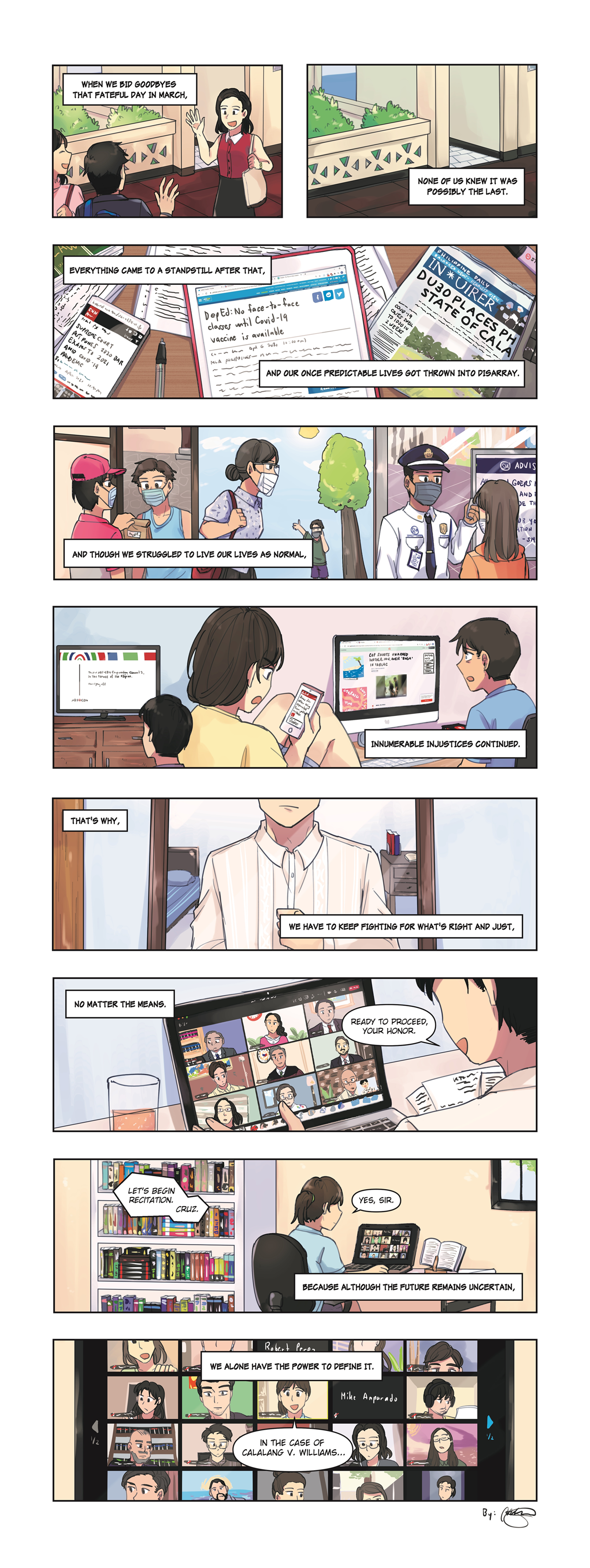

The Atlantic Council held the third installment of its “After the Insurrection” series, which examines how the January 6, 2021 attack on the US Capitol is shaping the response to domestic terrorism threats from white supremacists and anti-government groups, on May 11, 2021, 1:00 – 2:30pm EDT (May 12, 2021, 1:00 – 2:30am PHT) over Zoom. The session was titled “After the Insurrection: De-Platforming Violent Extremists: When, Why, and Why Not?” Among the speakers was Prof. Jenny Jean B. Domino, Associate Legal Adviser for the International Commission of Jurists and a Senior Lecturer at the UP College of Law.

Answering the moderator’s questions, Prof. Domino shared her thoughts on the Facebook Oversight Board’s review[1] of former U.S. President Donald Trump’s indefinite suspension from the platform, the importance of understanding context, which varies from place to place, in defining extremist speech, and how those in charge of moderating content perceive the severity of events outside the U.S. differently.

In particular, Prof. Domino referred to an article[2] she wrote on the matter and compared Facebook’s actions against former U.S. President Donald Trump after the January 6 insurrection with its actions against the Myanmar junta before and after that country’s February coup. She noted that while the events themselves were different, their proximity in time was notable, as was how Facebook responded to each. Both events began with allegations of voter fraud; the primary divergence was that in Myanmar the military overthrew the democratic government and installed a junta in its place. She stated that the de-platforming of some of Myanmar’s military generals had begun in 2018, yet Facebook never identified which Community Guidelines had been violated to justify the response, relying instead on the UN Fact-Finding Mission’s report on how the platform had been used to spread hate speech in the country. She believes that this failure to state and apply specific rules is what allowed members of the Myanmar military to continue creating accounts in the lead-up to the country’s November 2020 elections and to lay the groundwork for the coup.

Prof. Domino went on to relate that Facebook began limiting content from accounts and pages run by members of Myanmar’s military only 10 days after the February 1 coup. Only in the third week after the coup did Facebook start “indefinitely” banning military accounts and pages, in an action similar to Trump’s ban, and even then it retained 23 accounts and pages operated by the military, with significantly limited reach. She contrasted this with how Facebook responded to the January 6 insurrection in the United States. Ultimately, Prof. Domino concluded that while the proportionality of a response is difficult to evaluate, the actions taken in Myanmar pale in comparison to those taken in the United States after the Jan. 6 insurrection.

She also shared her perspective on social media companies introducing friction before violent action occurs, such as YouTube’s demonetization of videos. While agreeing that friction could help significantly, Prof. Domino believes that social media companies should also consider how they approach giving access to leaders with a track record of human rights abuses, especially in illiberal or authoritarian countries. Perhaps, she ventured, de-platforming would have been unnecessary had Facebook implemented friction measures when it entered the market in 2012-2013.

Other panelists in the event included J.M. Berger, Author and Ph.D. Candidate at Swansea University; Jared Holt, Resident Fellow of the DFRLab; and Emma Llansó, Director of the Free Expression Project at the Center for Democracy & Technology. The panelists agreed that de-platforming by social media sites has been based on subjective reasons and has been applied inconsistently. They also noted that while moves by governments to enact legislation restraining social media companies are welcome, empowering governments to restrict free speech carries risk. Another problem is that data that could be used for research into better means of content moderation is not accessible; at the same time, access to such data raises a host of legitimate privacy concerns.

[1] Nick Clegg, ‘Oversight Board Upholds Facebook’s Decision to Suspend Donald Trump’s Accounts’, Available at https://about.fb.com/news/2021/05/facebook-oversight-board-decision-trump/ May 2, 2021.

[2] Jenny Domino, ‘Beyond the Coup in Myanmar: The Other De-Platforming We Should Have Been Talking About’, Available at https://www.justsecurity.org/76047/beyond-the-coup-in-myanmar-the-other-de-platforming-we-should-have-been-talking-about/ May 11, 2021.
